The trillion-dollar question for AI business models

There is a sobering truth about artificial intelligence: It is not yet profitable. Money is pouring into AI and flowing through it – for the time being. But clear data on AI companies’ profits is hard to find.

The investment figures are striking: Private AI investments in the United States hit $109.1 billion in 2024, about 12 times China’s $9.3 billion and 24 times the United Kingdom’s $4.5 billion. This capital concentration reflects both the perceived strategic value of AI capabilities and the substantial infrastructure requirements for developing and deploying large language models at scale. However, the relationship between investment and profitability remains unclear.

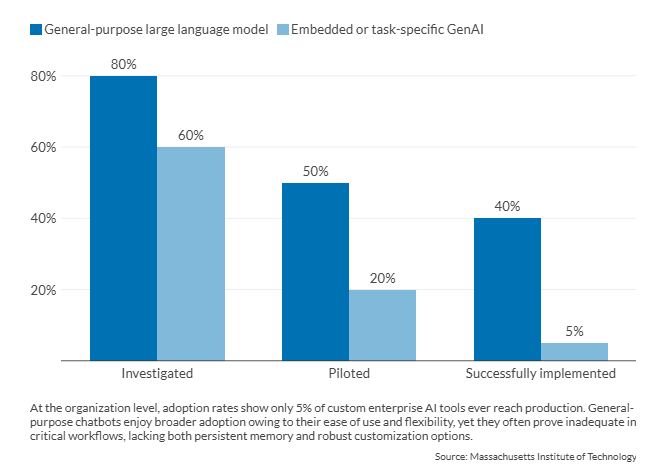

OpenAI reported a $5 billion loss in 2024 on approximately $3.4 billion in revenue, with projections indicating an $8 billion loss in 2025. Anthropic, another major player in the foundation model space, lost $5.3 billion in 2024 on revenues of $918 million. While examples of profitability – such as Nvidia – do exist, they are quite rare. These financial metrics indicate that current AI business models face fundamental challenges. A 2025 Massachusetts Institute of Technology study on enterprise AI adoption found that 95 percent of organizations implementing AI technologies reported no measurable return on investment.
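To put the two reported losses on a common scale, the figures above can be expressed as dollars lost per dollar of revenue. The following is a minimal sketch that uses only the numbers quoted in this report; the function name `loss_per_revenue_dollar` and the dictionary layout are illustrative choices, not part of any published methodology.

```python
# Figures as quoted in the text, in billions of U.S. dollars (2024).
reported = {
    "OpenAI": {"loss": 5.0, "revenue": 3.4},
    "Anthropic": {"loss": 5.3, "revenue": 0.918},
}


def loss_per_revenue_dollar(loss, revenue):
    """Dollars lost for each dollar of revenue earned (hypothetical helper)."""
    return loss / revenue


ratios = {
    name: loss_per_revenue_dollar(**figures)
    for name, figures in reported.items()
}

for name, ratio in ratios.items():
    print(f"{name}: ${ratio:.2f} lost per $1 of revenue")
```

On these figures, OpenAI lost about $1.47 and Anthropic about $5.77 for every dollar of revenue.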

Facts & figures: Organization-level AI adoption rates

If this is the case, why do entrepreneurs still invest in AI? And what will the trillion-dollar AI business model look like? Currently, there are three distinct sectors of AI development and application: One is clearly for-profit, one is non-profit and the last is state-subsidized.

For-profit AI may need non-profit lifelines

The investments needed to develop and deploy large language models present challenges for traditional for-profit business models. The costs of training frontier models have reached billions, while inference costs – the computational resources needed to generate responses to user queries – create ongoing operational burdens that scale with usage. OpenAI’s financial data reveals that inference costs alone accounted for 50 percent of revenues in 2024, while training costs accounted for an additional 75 percent. This cost structure, in which these two categories alone amount to roughly 125 percent of revenue before accounting for other business costs, indicates a fundamental pricing problem.
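The arithmetic behind this cost structure can be sketched in a few lines. The example below assumes only the two cost shares quoted above and treats them as fractions of 2024 revenue; the helper `margin_before_other_costs` is a hypothetical name introduced for illustration, and the calculation ignores all other expenses.

```python
# Cost shares as quoted in the text, expressed as fractions of 2024 revenue.
inference_share = 0.50
training_share = 0.75


def margin_before_other_costs(*cost_shares):
    """Return (total cost share, remaining margin) as fractions of revenue."""
    total = sum(cost_shares)
    return total, 1.0 - total


total_share, margin = margin_before_other_costs(inference_share, training_share)

print(f"Inference plus training: {total_share:.0%} of revenue")
print(f"Margin before other costs: {margin:.0%}")
```

Under these assumptions, inference and training together come to 125 percent of revenue, leaving a margin of minus 25 percent before salaries, sales or any other business cost is counted.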

Facts & figures: What is AI inference cost?

AI training is the process through which a neural network or AI model learns to perform a specific task. Inference is the process of applying a trained model to real-world data to generate outputs, such as predictions or decisions. This stage prioritizes speed and efficiency, often leveraging techniques like speculative decoding, quantization and pruning. These methods boost performance while aiming to preserve accuracy.

As models become more complex – particularly advanced AI reasoning systems – they demand greater computational resources for inference. The costs of AI inference primarily stem from the computing power required to run sophisticated models, especially deep learning architectures. Understanding and managing these expenses is essential for the sustainable development of AI technologies.

The unit economics become clearer when examining customer-facing applications. Cursor, an AI-enabled coding assistant that uses Anthropic’s Claude models, reportedly allocates 100 percent of its subscription revenue to Anthropic for model access while remaining unprofitable. Perplexity, an AI-powered search engine, spent 164 percent of its 2024 revenue on computing costs from Amazon Web Services, Anthropic and OpenAI.

These examples illustrate a value chain where neither the application layer nor the model provider layer achieves profitability at current pricing levels.

This pricing constraint stems from competitive dynamics and user expectations. Early AI companies subsidized their services to build user bases, setting prices that did not reflect actual costs. To achieve cost recovery, many companies would need to increase their prices by several hundred percent, which could lead to a loss of users and create a competitive disadvantage. This situation results in competitive price rigidity, where companies cannot raise prices without losing market share, yet they also struggle to achieve profitability at current prices.

The early pricing decisions have created a path dependency with significant implications. If the leading AI companies are unable to achieve profitability at scale, it raises concerns about the economic viability of developing AI models as a standalone commercial activity. In fact, some experts believe that as many as 90 percent of AI-related companies, particularly startups, will fail.

This raises questions about whether AI development requires alternative funding models, either through cross-subsidization from other business lines, non-profit structures or direct government support.

Infrastructure costs and the strategic asset thesis

The physical infrastructure requirements for AI development are creating a new category of capital expenditure. It is estimated that power demand from AI data centers in the U.S. will increase from 4 gigawatts (GW) in 2024 to 123 GW by 2035, representing a 30-fold increase over 11 years. The largest data centers currently in planning stages require up to 2 GW of power, equivalent to the output of a large nuclear power plant. This power requirement creates reliance on energy infrastructure that is beyond the control of individual companies.
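The projected jump in power demand can also be read as an annual growth rate. The sketch below uses the two endpoints quoted above and assumes, purely for illustration, that demand grows at a constant compound rate between 2024 and 2035; the source estimate does not specify the path, and the function names are hypothetical.

```python
# Endpoints as quoted in the text: U.S. AI data center power demand in gigawatts.
start_gw, end_gw = 4.0, 123.0
start_year, end_year = 2024, 2035


def growth_multiple(start, end):
    """How many times larger the end value is than the start value."""
    return end / start


def implied_annual_growth(start, end, years):
    """Constant compound annual growth rate linking start to end (assumption)."""
    return (end / start) ** (1 / years) - 1


years = end_year - start_year
multiple = growth_multiple(start_gw, end_gw)
annual = implied_annual_growth(start_gw, end_gw, years)

print(f"Growth multiple over {years} years: {multiple:.2f}x")
print(f"Implied compound annual growth: {annual:.1%}")
```

Under the smooth-growth assumption, a roughly 30-fold increase over 11 years corresponds to demand expanding by a little over a third each year.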

Global capital expenditure on data center infrastructure is projected to reach $7 trillion by 2030. This figure encompasses construction, power infrastructure, cooling systems and networking equipment. The scale of this investment approaches that of infrastructure spending typically associated with national priorities, such as transportation networks or power grids. OpenAI’s commitment to invest $300 billion in computing power with Oracle over five years illustrates the capital intensity at the company level.

The infrastructure challenge extends beyond capital costs to include permitting, power availability and supply-chain constraints. A 2025 survey of power company and data center executives found that 72 percent identified grid capacity as a very or extremely challenging constraint on data center development.

Interconnection queues (or backlogs) for new power generation projects now extend seven years in some regions, creating a bottleneck that cannot be resolved through private investment alone.

These infrastructure constraints are prompting a reassessment of AI’s strategic significance. In the third quarter of 2025, an industry analysis noted that “data centers transitioned from private utilities to instruments of national power,” reflecting a shift in how governments perceive AI infrastructure.

This transition is driven by three factors: the scale of capital required, the strategic importance of AI capabilities for economic competitiveness and the infrastructure dependencies that extend into energy and telecommunications policy.

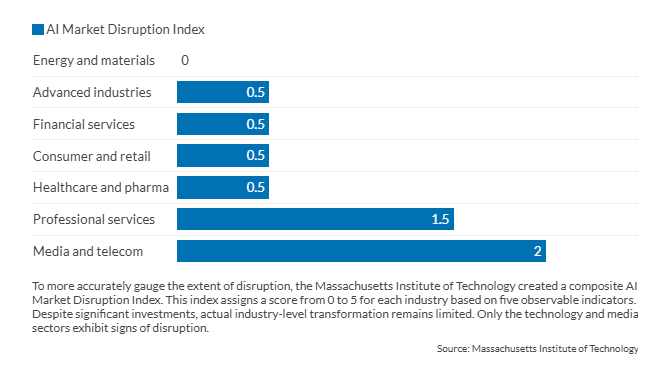

Facts & figures: AI disruption varies sharply by industry

State-subsidized models and sovereign AI

The concept of sovereign AI has emerged as governments seek to develop indigenous AI capabilities using domestic infrastructure, data and workforce. This model treats AI development as a strategic national priority rather than a commercial market activity. The approach reflects concerns about technological dependence, data sovereignty and the economic and security implications of relying on foreign AI systems.

Public-sector engagement appears in multiple forms. Direct investment in data centers and computing capacity is the most visible form of support. Saudi Arabia, for example, is positioning itself as a regional AI hub, with PwC estimating that the Middle East could capture $320 billion in economic value from AI deployment.

The U.S. has fast-tracked federal permitting procedures for data center infrastructure. Additionally, America’s AI Action Plan clearly identifies high-quality data as a vital national strategic asset. Meanwhile, the European Union and European Investment Bank are joining forces to finance and roll out European data centers to “bolster EU technological independence and competitiveness.”

Public-private partnerships are another model for state involvement. These structures combine government funding, participation from development finance institutions and private-sector expertise to reduce risks associated with large infrastructure projects. This blended-finance approach allows governments to benefit from the private sector’s efficiency while retaining strategic control over critical infrastructure. This model is becoming particularly prevalent in regions where private capital alone cannot meet the necessary investment scale.

The shift toward government involvement is also evident in corporate restructuring. OpenAI’s transition from a non-profit to a for-profit public benefit corporation, with Microsoft owning a 27 percent stake while the original non-profit retains a $130 billion stake, exemplifies a hybrid model that combines the ability to raise commercial capital with mission-driven governance. This structure acknowledges that pure non-profit models often encounter capital constraints, while pure for-profit models are pressured to prioritize financial returns over other objectives.

The trend of sovereign AI raises important questions about the future structure of the AI industry. If AI infrastructure relies on government support to reach the necessary scale, the industry may evolve into a model similar to other strategic sectors, like aerospace, semiconductors or telecommunications, where government involvement is common. This would mark a departure from the predominantly commercial structure of the software industry and could affect innovation dynamics, international collaboration and technology transfer.

Scenarios

Most likely: The hybrid ecosystem

In the most likely scenario, for-profit companies, non-profit research institutions and government-backed entities coexist in a differentiated market structure. For-profit companies focus on application-layer services and specialized models for specific industries, drawing on foundational models from larger entities. Non-profit institutions conduct fundamental research and explore safety, ethics and societal implications. State-backed entities develop sovereign capabilities and ensure national competitiveness.

This model would maintain competitive dynamics while acknowledging the need for state involvement in foundational infrastructure. International tensions could arise if state-backed entities compete directly with commercial companies in third markets. This scenario is the most likely because it continues the current path.

This scenario has pitfalls. For example, private actors are incentivized to lobby governments to take on more costs as “foundational.” Governments, in turn, may want to intervene on “antitrust” grounds against “overly” dominant AI corporations.

Finally, in this scenario, some countries delegate more to their governments (China), others regulate (the EU) and still others prioritize market forces (the U.S.).

Less likely: The competition model

This scenario is possible but less likely; it is, however, the best case. Regulatory frameworks remain minimal, allowing market forces to drive AI development through competition rather than public-sector coordination. In this scenario, innovation emerges from distributed sources – start-ups, research labs, established technology companies and specialized firms – rather than being concentrated in state-backed entities. The absence of heavy-handed regulation enables rapid experimentation with business models, technical architectures and applications. Companies compete on performance, cost and deployment speed, encouraging continuous innovation.

Private companies establish partnerships with government entities, especially in defense and national security applications, without requiring comprehensive state control over AI infrastructure. These collaborations operate on a contractual basis, with private companies developing capabilities that serve both commercial and government markets.

Defense contractors and technology companies accelerate product development cycles by competing for government contracts while maintaining the flexibility to pursue commercial opportunities. This dual-use approach helps companies spread out research and development costs over multiple revenue streams.

This scenario would likely produce faster innovation cycles and greater diversity in technical approaches, as companies pursue differentiated strategies to gain a competitive advantage.

The risk profile differs from state-controlled models: Market failures and company bankruptcies would be more common, but the distributed nature of development would lessen single points of failure. The challenge lies in coordinating on safety standards and interoperability without formal regulatory mechanisms, relying instead on industry consortia and market incentives for standardization.

Unlikely: The consolidated utility model

The development of AI infrastructure is concentrated in a few state-backed entities that offer foundational models and computing capacity as regulated services. This structure mirrors that of public utilities in sectors such as electricity, water and telecommunications. It allows for the sharing of capital costs associated with AI development through government funding or regulated cost recovery, while private companies create applications and services using this foundational infrastructure.

This scenario is unlikely because it requires substantial cooperation among the various actors involved. Additionally, individual actors have an incentive to break free from this model as soon as they sense potential private gains. The model could stifle innovation in the AI sector by effectively regulating the business cases and applications for AI. This scenario would also lead to an AI divide, since administrations that succeed in regulating AI effectively and providing it with sufficient resources would be far ahead of those with poor regulations or fewer resources.

This report was originally published here: https://www.gisreportsonline.com/r/ai-business-models/