This vision stems from the EU’s clout as a large market that can impose regulatory protectionism on goods and services that do not meet European standards. However, as Europe’s economic strength wanes relative to other regions, this advantage also recedes. Regulatory protectionism risks stifling competition, thereby inhibiting productivity, innovation and growth.

Artificial intelligence: Regulations vs. innovation

The EU’s latest AI draft legislation risks stifling innovation in Europe and opens the door to misuse by governments.

The European Parliament has recently endorsed draft legislation, known as the AI Act, to regulate artificial intelligence.

Much like financial-industry regulation, this legislation adopts a “risk-based approach,” which concentrates on the areas where the most significant harm could occur. The legal system, access to public services and, notably, critical infrastructure are deemed particularly high-risk. However, the risk-based approach has its own drawbacks. It tends to create an illusion of risk mitigation, because it relies on past experience and existing classifications rather than leaving room to anticipate future risks. Moreover, it struggles to keep pace with rapid innovation and, unless carefully managed, may end up stifling it.

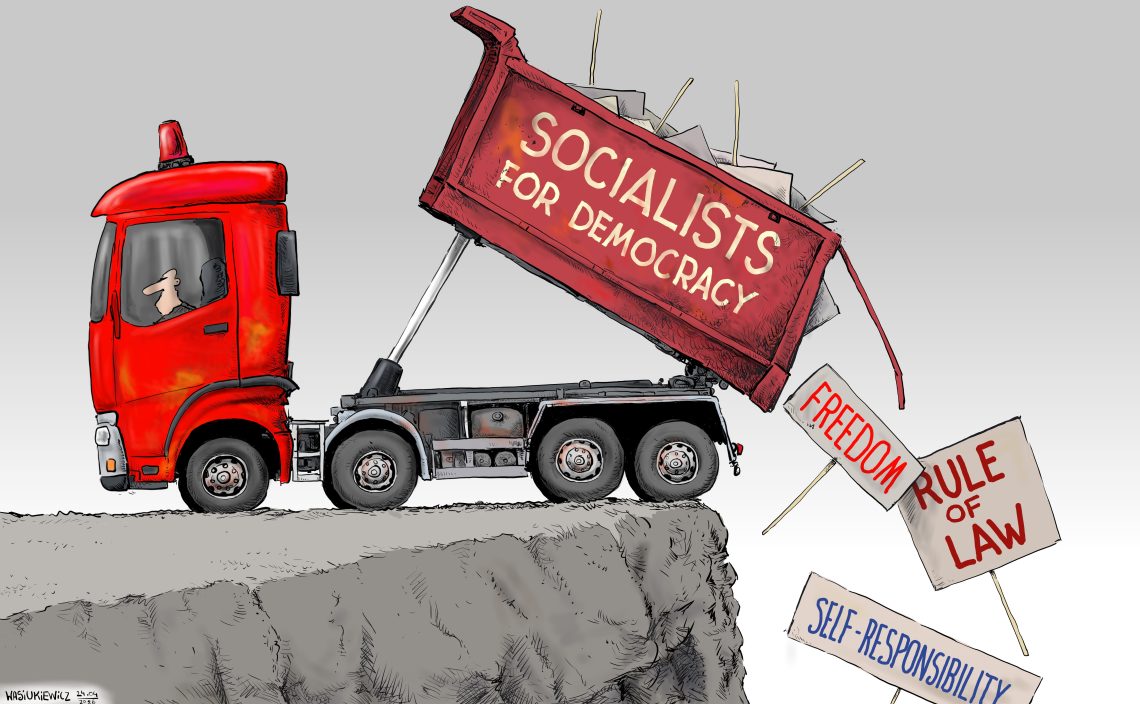

The Act also addresses the crucial matter of citizens’ data protection and privacy rights. While it claims to protect these rights in areas such as facial recognition, it also opens the door to government intrusion under the guise of crime prevention. Unless such measures are targeted at individuals and subject to judicial oversight, they risk undermining the very protection they are meant to provide. In such circumstances, concerns about potential misuse by almost any government are well founded.

Regulations to take effect in January 2024

The rise of generative AI, such as ChatGPT, has underscored the need for prompt action. The draft legislation will now go through the EU’s legislative process and requires final approval from the Council of the European Union, which represents the member states’ governments. It is slated to take effect in January 2024.

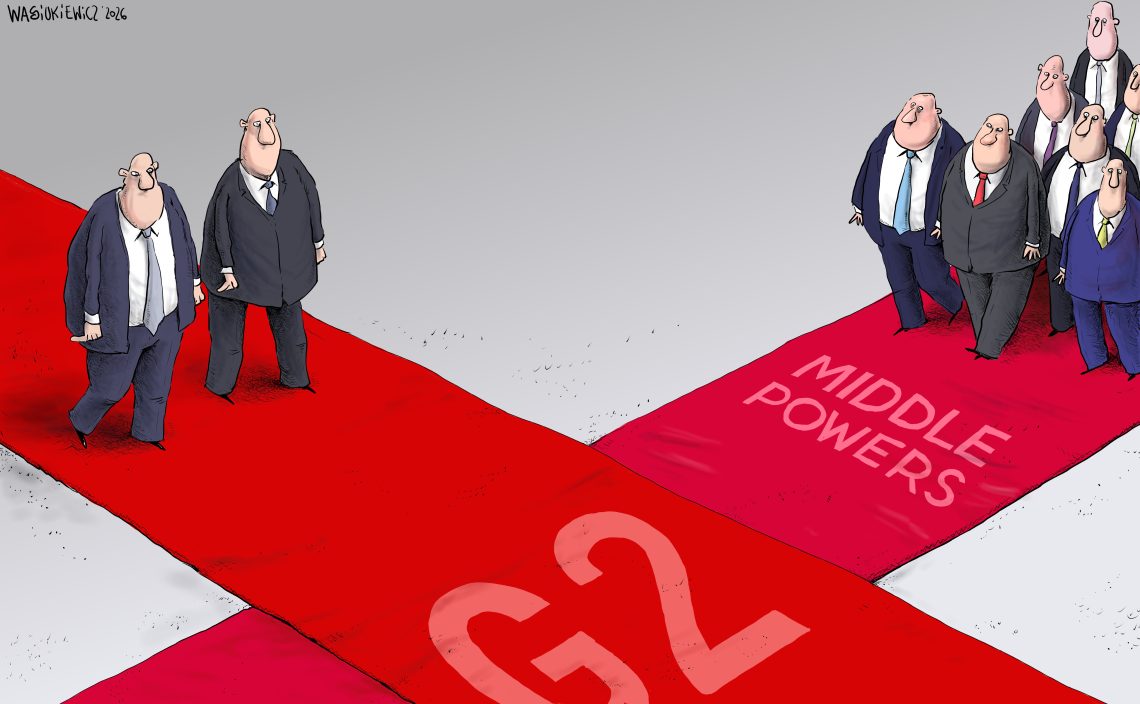

Europe, once a leader in soft power and still a significant player, seeks to offset its relative decline in global political and economic influence, as well as its lack of robust defense capabilities. Some see becoming a so-called “regulatory superpower” as a way to achieve this.

Some regulation, even of AI, is necessary. Yet overly stringent rules have clearly held back critical future sectors such as biotechnology, including CRISPR gene editing, where Europe now lags behind. Given past experience, another possible motive for this legislation could be to curb the growth of foreign Big Tech, especially U.S. firms.

While the European Parliament may have good intentions, there is a genuine concern that excessive regulation and protectionism could have unintended consequences. Europe has a good chance of becoming a pioneer in AI regulation, but it risks forfeiting its potential to lead in innovation.

This comment was originally published by GIS: